The plan for this post is to give a brief introduction to verbs in Biblical Hebrew, introduce some vocabulary, and then read the account of the first day of creation in Genesis 1:1-5.

Hebrew verbs come in several forms, including the perfect, imperfect, wayyiqtol, weqatal, infinitive, participle, imperative, jussive, and cohortative.1 In this post I’ll focus mostly on the perfect, imperfect, and wayyiqtol forms. A Hebrew verb is also inflected according to a derived stem called a binyan, a feature characteristic of Semitic languages. I’ll save the other binyanim for another time and focus here on the qal binyan, which is the most basic one. Hebrew verbs are built on roots, usually of three consonants. We build on a root by adding certain vowels to its consonants, as well as certain endings or prefixes.

The perfect and imperfect forms indicate the extension or completeness of an action in time. In the perfect form the action is completed and done. In the imperfect form the action is uncompleted or has a repeated, ongoing character.2

Let’s look first at the perfect form, using the verb שָׁמַר, meaning “watch”, “guard”, or “keep”, as an example. The third-person, masculine, singular form is שָׁמַר, with a qamatz on the first syllable, a pataḥ on the second syllable, and no ending added. All other person, gender, and number combinations have endings added. Here are the perfect forms of the verb שָׁמַר, “to keep”:

שָׁמַרְתִּי – I kept

שָׁמַרְתָּ – You (m) kept

שָׁמַרְתְּ – You (f) kept

שָׁמַר – He kept

שָׁמְרָה – She kept

שָׁמַרְנוּ – We kept

שְׁמַרְתֶּם – You all (m) kept

שְׁמַרְתֶּן – You all (f) kept

שָׁמְרוּ – They kept

First-person, singular has a תִּי- ending, שָׁמַרְתִּי. Second-person, masculine, singular has a תָּ- ending, שָׁמַרְתָּ. Second-person, feminine, singular has a תְּ- ending, שָׁמַרְתְּ. Third-person, masculine, singular is unmarked, with no ending added, שָׁמַר. Third-person, feminine, singular has a ה- ending, a hey preceded by a qamatz, שָׁמְרָה. First-person, plural has a נוּ- ending, שָׁמַרְנוּ. Second-person, masculine, plural has a תֶּם- ending, שְׁמַרְתֶּם. Second-person, feminine, plural has a תֶּן- ending, שְׁמַרְתֶּן. And third-person, plural has an וּ- ending, שָׁמְרוּ. Notice that the vowels of the stem also shift slightly before some of these endings.
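
For readers who like to see a paradigm as a table of rules, here is a minimal Python sketch that rebuilds the nine perfect forms above from three root consonants. The names `STEMS`, `PERFECT`, and `qal_perfect` are my own illustrative choices, not standard terminology, and the sketch assumes a regular ("strong") root like שָׁמַר. It will not produce correct forms for roots such as בָּרָא, whose final aleph changes the vowels.

```python
import unicodedata

# Hebrew points as Unicode combining characters.
SHEWA, HIRIQ, SEGOL, PATAH, QAMATS = "\u05B0", "\u05B4", "\u05B6", "\u05B7", "\u05B8"
DAGESH, SHIN_DOT = "\u05BC", "\u05C1"

# Vowels written on root letters 1, 2, and 3 for each stem shape.
STEMS = {
    "plain":   (QAMATS, PATAH, SHEWA),  # shamar- before most consonantal endings
    "bare":    (QAMATS, PATAH, ""),     # the unmarked third-person masculine singular
    "reduced": (QAMATS, SHEWA, ""),     # shamr- before endings that begin with a vowel
    "short":   (SHEWA, PATAH, SHEWA),   # shmar- before the second-person plural endings
}

# (stem shape, ending) for each person/gender/number combination.
PERFECT = {
    "1cs": ("plain", "ת" + DAGESH + HIRIQ + "י"),
    "2ms": ("plain", "ת" + DAGESH + QAMATS),
    "2fs": ("plain", "ת" + DAGESH + SHEWA),
    "3ms": ("bare", ""),
    "3fs": ("reduced", QAMATS + "ה"),
    "1cp": ("plain", "נ" + "ו" + DAGESH),
    "2mp": ("short", "ת" + DAGESH + SEGOL + "ם"),
    "2fp": ("short", "ת" + DAGESH + SEGOL + "ן"),
    "3cp": ("reduced", "ו" + DAGESH),
}

def qal_perfect(c1, c2, c3):
    """Return the qal perfect paradigm of a strong root as a dict of pointed forms."""
    forms = {}
    for label, (shape, ending) in PERFECT.items():
        v1, v2, v3 = STEMS[shape]
        word = c1 + v1 + c2 + v2 + c3 + v3 + ending
        # Normalizing puts the combining marks in one canonical order,
        # so the results compare equal to the same words typed by hand.
        forms[label] = unicodedata.normalize("NFC", word)
    return forms

# The root of "to keep": shin (with its dot), mem, resh.
SHAMAR = qal_perfect("ש" + SHIN_DOT, "מ", "ר")
```

Looking up `SHAMAR["1cs"]` gives שָׁמַרְתִּי and `SHAMAR["3cp"]` gives שָׁמְרוּ, matching the list above.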

Now the imperfect form. The imperfect form is used for actions that are ongoing or repeated. In the imperfect form the root is modified with prefixes, as well as endings in some cases. Here is each person, gender, and number for the qal imperfect of שָׁמַר:

אֶשְׁמֹר – I will keep

תִּשְׁמֹר – You (m) will keep

תִּשְׁמְרִי – You (f) will keep

יִשְׁמֹר – He will keep

תִּשְׁמֹר – She will keep

נִשְׁמֹר – We will keep

תִּשְׁמְרוּ – You all (m) will keep

תִּשְׁמֹרְנָה – You all (f) will keep

יִשְׁמְרוּ – They (m) will keep

תִּשְׁמֹרְנָה – They (f) will keep

First-person, singular has an -אֶ prefix, אֶשְׁמֹר. Second-person, masculine, singular has a -תִּ prefix, תִּשְׁמֹר. Second-person, feminine, singular has a -תִּ prefix as well as a י- ending, תִּשְׁמְרִי. Third-person, masculine, singular has a -יִ prefix, יִשְׁמֹר. Third-person, feminine, singular has a -תִּ prefix, תִּשְׁמֹר. First-person, plural has a -נִ prefix, נִשְׁמֹר. Second-person, masculine, plural has a -תִּ prefix and an וּ- ending, תִּשְׁמְרוּ. Second-person, feminine, plural has a -תִּ prefix and a נָה- ending, תִּשְׁמֹרְנָה. Third-person, masculine, plural has a -יִ prefix and an וּ- ending, יִשְׁמְרוּ. Third-person, feminine, plural has a -תִּ prefix and a נָה- ending, תִּשְׁמֹרְנָה. Note that some forms are identical: the second-person, masculine, singular and the third-person, feminine, singular are both תִּשְׁמֹר, and the two feminine plurals are both תִּשְׁמֹרְנָה.
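
The same prefixes and endings can be written down as a small lookup table. This is only a sketch under the assumption of a regular three-consonant root like שָׁמַר. The names `IMPERFECT` and `qal_imperfect` are hypothetical labels I chose for illustration.

```python
import unicodedata

# Hebrew points as Unicode combining characters.
SHEWA, HIRIQ, SEGOL, QAMATS, HOLAM = "\u05B0", "\u05B4", "\u05B6", "\u05B8", "\u05B9"
DAGESH, SHIN_DOT = "\u05BC", "\u05C1"

ALEF_PREFIX = "א" + SEGOL
TAV_PREFIX = "ת" + DAGESH + HIRIQ
YOD_PREFIX = "י" + HIRIQ
NUN_PREFIX = "נ" + HIRIQ
NAH_ENDING = SHEWA + "נ" + QAMATS + "ה"  # shewa under root letter 3, then -nah

# (prefix, vowel on root letter 2, what follows root letter 3)
IMPERFECT = {
    "1cs": (ALEF_PREFIX, HOLAM, ""),
    "2ms": (TAV_PREFIX, HOLAM, ""),
    "2fs": (TAV_PREFIX, SHEWA, HIRIQ + "י"),
    "3ms": (YOD_PREFIX, HOLAM, ""),
    "3fs": (TAV_PREFIX, HOLAM, ""),
    "1cp": (NUN_PREFIX, HOLAM, ""),
    "2mp": (TAV_PREFIX, SHEWA, "ו" + DAGESH),
    "2fp": (TAV_PREFIX, HOLAM, NAH_ENDING),
    "3mp": (YOD_PREFIX, SHEWA, "ו" + DAGESH),
    "3fp": (TAV_PREFIX, HOLAM, NAH_ENDING),
}

def qal_imperfect(c1, c2, c3):
    """Return the qal imperfect paradigm of a strong root as a dict of pointed forms."""
    forms = {}
    for label, (prefix, v2, ending) in IMPERFECT.items():
        # Root letter 1 always carries a shewa after the prefix in this pattern.
        word = prefix + c1 + SHEWA + c2 + v2 + c3 + ending
        forms[label] = unicodedata.normalize("NFC", word)
    return forms

YISHMOR = qal_imperfect("ש" + SHIN_DOT, "מ", "ר")
```

The table makes the overlaps easy to spot: `YISHMOR["2ms"]` and `YISHMOR["3fs"]` are the same string, as are `YISHMOR["2fp"]` and `YISHMOR["3fp"]`.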

The wayyiqtol and weqatal forms are similar to the imperfect and perfect forms, respectively. They are formed by adding a vav in front of the imperfect and perfect forms, plus a few additional modifications.3 For example, in the wayyiqtol a dagesh is placed in the letter following the vav, in most cases. Using the verb שָׁמַר, the third-person, masculine, singular wayyiqtol form is וַיִּשְׁמֹר. The names wayyiqtol and weqatal are conventional grammatical labels based on forms of the model verb קָטַל, “to kill”, which is often used to show verb forms. The wayyiqtol form is also sometimes called the waw-consecutive, the consecutive imperfect, or the converted imperfect. The wayyiqtol is extremely common, as we will see in Genesis 1.
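
As a rough illustration of the "vav plus dagesh" rule just described, here is a Python sketch that turns an imperfect form into a wayyiqtol. The function name `wayyiqtol` is hypothetical. The sketch covers only the simplest cases: it adds vav with a pataḥ and a dagesh in the prefix letter, and before an aleph prefix (which cannot take a dagesh) it uses vav with a qamatz instead, as in וָאֹמַר. It does not model the other modifications, such as the vowel changes between an imperfect and a form like וַיֹּאמֶר, or the dropped dagesh in וַיְהִי.

```python
import unicodedata

PATAH, QAMATS, DAGESH = "\u05B7", "\u05B8", "\u05BC"
VAV, ALEF = "ו", "א"

def wayyiqtol(imperfect):
    """Prefix an imperfect form with the wayyiqtol vav (simplified rule only)."""
    s = unicodedata.normalize("NFC", imperfect)
    first, rest = s[0], s[1:]
    # Split off the points attached to the prefix consonant.
    i = 0
    while i < len(rest) and unicodedata.combining(rest[i]):
        i += 1
    marks, tail = rest[:i], rest[i:]
    if first == ALEF:
        # Aleph takes no dagesh, so the vav gets a qamatz instead of a patah.
        out = VAV + QAMATS + first + marks + tail
    else:
        # The tav prefix already carries a dagesh, so only add one if missing.
        if DAGESH not in marks:
            marks += DAGESH
        out = VAV + PATAH + first + marks + tail
    return unicodedata.normalize("NFC", out)
```

Given יִשְׁמֹר this returns וַיִּשְׁמֹר, the form cited above.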

Shortly we’ll look at Genesis 1, so let’s look at these verb forms using vocabulary from that chapter. Some of the verbs we will see in Genesis 1 have slightly different forms than שָׁמַר. Their roots have features like guttural letters, an initial nun, an initial yud, only two letters, or doubled (geminate) letters.

The first verb in the Bible is בָּרָא (bara), “to create”. This verb is what is called either a III-aleph or lamed-aleph verb, which has a few differences in its conjugation. But the general form is quite similar. In Genesis 1:1 this occurs in the perfect in the third-person, masculine, singular form. But we’ll look at the other forms for practice:

בָּרָאתִי – I created

בָּרָאתָ – You (m) created

בָּרָאתְ – You (f) created

בָּרָא – He created

בָּרְאָה – She created

בָּרָאנוּ – We created

בְּרָאתֶם – You all (m) created

בְּרָאתֶן – You all (f) created

בָּרְאוּ – They created

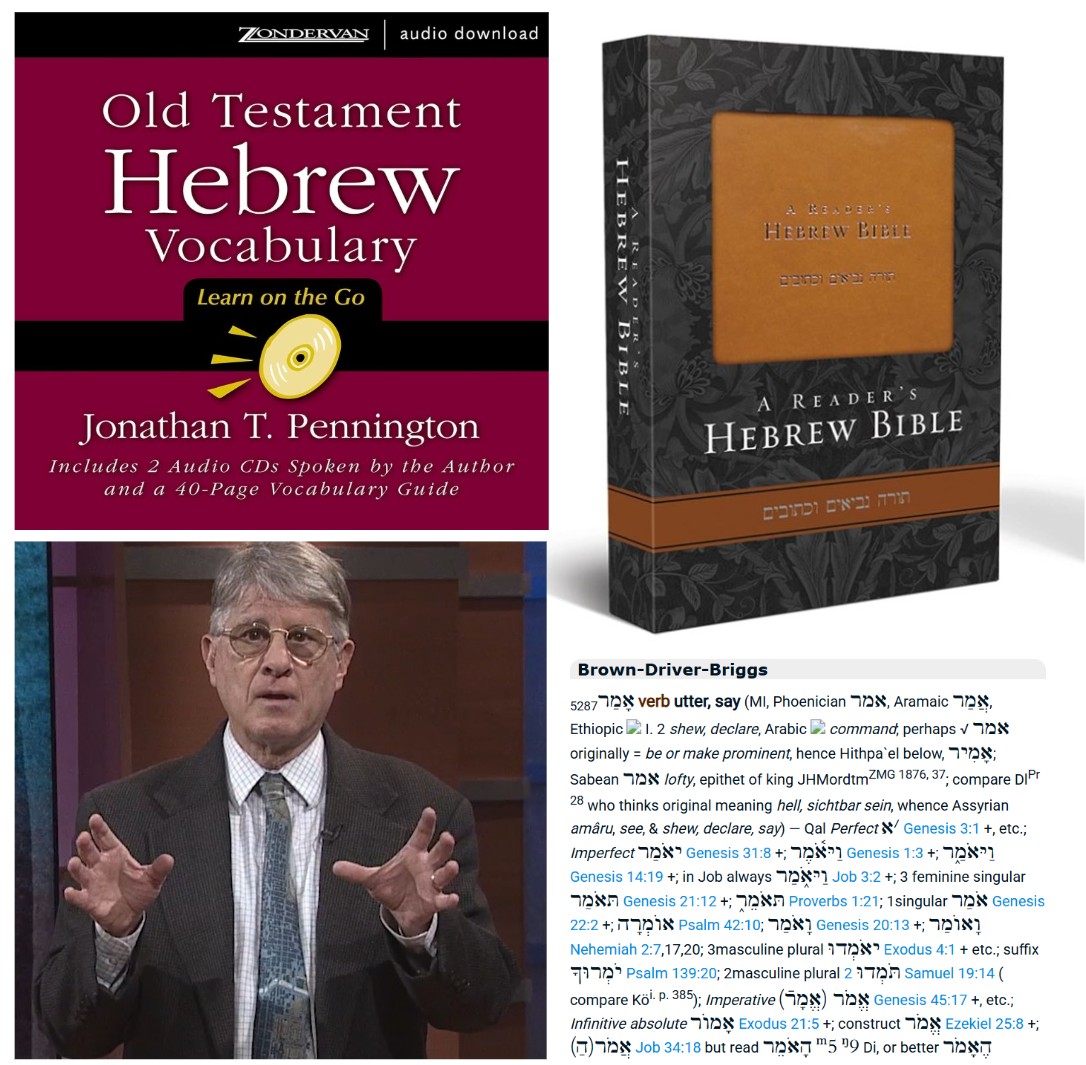

Another verb we’ll see in Genesis 1 is אָמַר (amar), “to say”. This kind of verb is called either a I-aleph or a pey-aleph verb, which also has a few differences in its conjugation. But we can see the same general pattern. In Genesis 1:3 this occurs in the wayyiqtol in the third-person, masculine, singular form. We’ll look at all the person, gender, and number forms of the wayyiqtol for practice:

וָאֹמַר – And I said

וַתֹּאמֶר – And you (m) said

וַתֹּאמְרִי – And you (f) said

וַיֹּאמֶר – And he said

וַתֹּאמֶר – And she said

וַנֹּאמֶר – And we said

וַתֹּאמְרוּ – And you all (m) said

וַתֹּאמַרְנָה – And you all (f) said

וַיֹּאמְרוּ – And they (m) said

וַתֹּאמַרְנָה – And they (f) said

One form we will see a lot in Genesis 1 is וַיֹּאמֶר (vayomer), “and he said”.

Before moving on to Genesis 1 let’s introduce some vocabulary that we will see in the chapter:

אֱלֹהִים – God

שָׁמַיִם – Heavens

אֶרֶץ – Earth

רֵאשִׁית – Beginning

אֵת – Direct object marker

בְּ – In, against

לְ – To, toward

וְ – And, or

הָיָה – To be

עַל – On, upon

פָּנִים – Face

רוּחַ – Wind, Spirit

מַיִם – Water

אָמַר – To say

אוֹר – Light

חֹשֶׁךְ – Darkness

רָאָה – To see

בָּדַל – To separate

בֵּין – Between

טוֹב – Good

כִּי – That

קָרָא – To call

יוֹם – Day

לָיְלָה – Night

עֶרֶב – Evening

בֹּקֶר – Morning

אֶחָד – One

With that vocabulary, let’s move on to Genesis chapter 1.

The first chapter of Genesis is one of the most beautiful and awe-inspiring in all of scripture, and in all of world literature. There’s lots of fascinating stuff here. I’d like to look at the Hebrew text and point out some interesting features of the Hebrew language itself as well as consider some intriguing theological details that this chapter has inspired over the centuries. In the following we’ll see the perfect and wayyiqtol verb forms and our vocabulary words. We’ll also see some other interesting grammatical features that I’ll comment on as they come up.

The first verse, Genesis 1:1 reads:

בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃

This can be translated in different ways. Here are the King James Version and New Jewish Publication Society translations.

King James Version: “In the beginning God created the heaven and the earth.”

New Jewish Publication Society: “When God began to create heaven and earth…”

The way the King James Version renders it is the more common way. This is also how it’s translated in the NRSV, NASB, ESV, NIV, and CSB. It describes a completed action. The NJPS translation treats the verse as a subordinate clause describing the circumstances under which creation began. The difference has to do with how to translate the first word, בְּרֵאשִׁית. And this is an opportunity to talk about an interesting feature of Hebrew nouns.

Hebrew nouns can have an absolute form or a construct form. For example, the plural of בֵּן, the word for “son”, in its absolute form is בָּנִים. And that’s just “sons” by itself. But if you want to say “sons of” something you have to use the construct form, בְּנֵי. To say “sons of Israel” you don’t say בָּנִים יִשְׂרָאֵל, you say בְּנֵי יִשְׂרָאֵל. Not “sons Israel” but “sons of Israel”. What we translate in English as “of” is denoted by that construct form of the noun. The interesting thing about בְּרֵאשִׁית is that in the other instances where this word is used in this form it is a construct form, the beginning of something. For example, the beginning of someone’s reign (Jeremiah 26:1, Jeremiah 27:1, Jeremiah 28:1, Jeremiah 49:34). Jeremiah 26:1 reads בְּרֵאשִׁ֗ית מַמְלְכוּת יְהֹויָקִים, “In the beginning of the reign of Jehoiakim”. You actually have two construct forms in a row there, both “beginning of” and “reign of”. So there’s an argument that a similar thing is happening in Genesis 1:1, that it’s not just “in the beginning” but “in the beginning of…” A kind of awkward literal translation would be something like “in the beginning of God created” or “in the beginning of God creating”. But a more natural rendering in English is “when God began to create”.4,5,6

So is that right? Well, that’s the debate. And it’s not just something modern scholars have brought up recently. This is an ancient debate. The medieval French rabbi Rashi (1040-1105) proposed this reading.7 Theologically and literarily, each reading frames the verse in a slightly different way. There’s been a ton of back and forth, and the question is not settled, so I’ll leave it there.

The second word is בָּרָא, “he created”. This is an example of the perfect form we looked at earlier. In this verse בָּרָא is in the third person, masculine, singular. “He created”.

The third word is אֱלֹהִים. “God” or sometimes “gods”, plural. אֱלֹהִים can be used explicitly to refer to plural gods, such as the gods of other nations. But here אֱלֹהִים is not talking about plural actors. Why do we say that? Because of the form of the previous word, בָּרָא. The form בָּרָא is singular, not plural. It’s בָּרָא אֱלֹהִים not בָּרְאוּ אֱלֹהִים. So אֱלֹהִים is a single actor even though it has a plural ending.

In the rest of the first verse, God created אֵת הַשָּׁמַיִם וְאֵת הָאָֽרֶץ. He created heaven and earth. Note there the use of אֵת, which is a direct object marker, heaven and earth here each being direct objects of God’s creation. Note also that שָׁמַיִם and אֶרֶץ are prefixed with a ה, which is similar to the definite article “the” in English. So a more literal translation like King James will say “the heaven and the earth”. Also note the ו before the second אֵת, which here means “and”. It can also mean “or” in other circumstances.

Verse 2:

וְהָאָ֗רֶץ הָיְתָ֥ה תֹ֙הוּ֙ וָבֹ֔הוּ וְחֹ֖שֶׁךְ עַל־פְּנֵ֣י תְהֹ֑ום וְר֣וּחַ אֱלֹהִ֔ים מְרַחֶ֖פֶת עַל־פְּנֵ֥י הַמָּֽיִם׃

“And the earth was without form, and void; and darkness was upon the face of the deep. And the Spirit of God moved upon the face of the waters.” (KJV)

In this verse we see an instance of the verb הָיָה, “to be”. In this verse it takes the form הָיְתָה, which is the perfect, third person, feminine, singular. It’s in the perfect form so it’s something completed, so we can say it “was”. The earth was תֹהוּ וָבֹהוּ, “tohu va-bohu”, a famous Hebrew phrase. There’s a quite lyrical sound to this pair of words. Robert Alter, in his recent translation of the Hebrew Bible, tried to capture this same lyrical sound in English with “welter and waste”. I quite like that!8

And darkness חֹשֶׁךְ was upon the face of the deep עַל־פְּנֵי תְהֹום. The preposition עַל is “on” or “over”. “Face”, פָּנִים, is an interesting word. It’s a very common word, and one of those words in a language that gets used for more than just its literal meaning. It is a plural-form noun, which in the absolute is פָּנִים, but it is also extremely common in the construct form that I mentioned earlier. The construct form is פְּנֵי, the face of something. So it’s not עַל־פָּנִים תְהֹום but עַל־פְּנֵי תְהֹום.

Then there’s this תְהֹום, the deep. תְהֹום has cognates in other Semitic languages. In Akkadian it’s tiāmtum. In the Babylonian creation story, the Enūma Eliš, we see this word in the name of the ocean goddess Tiamat. So תְהֹום was a word with a lot of meaning and association in the wider cultural sphere of the Semitic-speaking world.9

Then there’s a Spirit of God, רוּחַ אֱלֹהִ֔ים. Also translated, in the NRSV for example, as a “wind from God”. As in many languages, the same word can mean “wind”, “breath”, and “spirit”. Even the English word “spirit” is etymologically related to breathing. And so it is with רוּחַ, it’s both “spirit” and “breath”.10 And what was this wind or spirit from God doing? It moved over or swept over the face of the waters. Very interesting verb here, רָחַף. In this verse it’s in a participle form, מְרַחֶפֶת. Not a very common word. Just a few occurrences in the Bible. It’s often translated as “hovering” over the waters. It could even be thought of as “brooding” like a bird. One of the other occurrences of this word רָחַף is in Deuteronomy 32:11 that talks about an eagle that hovers over its young, עַל־גֹּוזָלָיו יְרַחֵף. Then again we see this use of the word for face in its construct form, over the face of the waters, עַל־פְּנֵי הַמָּיִם.

Verse 3:

וַיֹּ֥אמֶר אֱלֹהִ֖ים יְהִ֣י אֹ֑ור וַֽיְהִי־אֹֽור׃

“And God said, Let there be light: and there was light.” (KJV)

In this verse the verb אָמַר, “to say”, takes on the wayyiqtol form. “And God said”, וַיֹּאמֶר אֱלֹהִים, “Let there be light”, יְהִי אֹור. Here we see the verb הָיָה, “to be” again. This time it’s in what’s called the jussive form. Jussive indicates a desire for something to be the case. It’s not merely indicative, stating that something is, nor is it quite imperative, commanding that something be. But something in between: “Let there be”. “Let there be”, יְהִי, “light”, אֹור.

“And there was light”, וַיְהִי־אֹור. This is another wayyiqtol, וַיְהִי, of the verb הָיָה, “to be”. “And there was light”.

Verse 4:

וַיַּ֧רְא אֱלֹהִ֛ים אֶת־הָאֹ֖ור כִּי־טֹ֑וב וַיַּבְדֵּ֣ל אֱלֹהִ֔ים בֵּ֥ין הָאֹ֖ור וּבֵ֥ין הַחֹֽשֶׁךְ׃

“And God saw the light, that it was good: and God divided the light from the darkness.” (KJV)

And God אֱלֹהִים saw וַיַּרְא. This is a third-person, masculine, singular, wayyiqtol of the verb רָאָה, “to see”. He saw the light, אֶת־הָאֹור. Note the direct object marker, אֶת, and the ה prefix acting like a definite article, “the light”. He saw that, כִּי, it was good, טֹוב. In English we say “that it was good” but in Hebrew the “it was” isn’t necessary and you just say כִּי־טֹוב. You’ve probably heard the expression מזל טוב, “good fortune”. That’s an easy way to remember the word טֹוב for “good”.

And God אֱלֹהִים divided, or separated וַיַּבְדֵּל. Another wayyiqtol. Third-person, masculine, singular of the verb בָּדַל, “to divide” or “separate”. Note again the vav and the yud with a dagesh before the root of the verb. It’s worth noting that this act of dividing is characteristic of God’s creation in Genesis 1. We see this verb בָּדַל five times in the chapter: here in verse 4, when God separates the waters above and below the firmament (verses 6 & 7), and when he creates the sun and moon to separate day and night (verses 14 & 18). But we see the concept with other words as well, as when God gathers all the waters together to make dry land (verses 9 & 10) and when he makes humans male and female (verse 27). To me this contrasts with the תֹהוּ וָבֹהוּ, tohu va-bohu at the beginning of the chapter. God is imposing order by separating things into coherent categories.

God makes a separation between בֵּין the light הָאֹור and between וּבֵין the darkness הַחֹשֶׁךְ. The word for “between” here is בֵּין. Note also the vav prefix before the second בֵּין, “and between”. The vav acts as a conjunction “and” as usual but here also takes the form of a shuruq, pronounced “oo”. This happens when the vav prefix comes before certain letters: bet, mem, and pey (labial letters). In this case the bet also loses its dagesh and changes pronunciation. So with בֵּין, “between”, “and between” becomes וּבֵין.
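
The labial rule can be sketched in a few lines of Python. The function name `with_vav` is hypothetical, and this is a simplification: it only distinguishes the shuruq before bet, mem, and pey from the default vav with a shewa. It ignores the other environments that change the vav (for example the qamatz in וָבֹהוּ in verse 2). The post mentions the lost dagesh only for bet. Removing it from the other letters that take this kind of dagesh (gimel, dalet, kaf, pey, tav) is my own extension of the same principle.

```python
import unicodedata

SHEWA, DAGESH = "\u05B0", "\u05BC"
VAV = "ו"
LABIALS = {"ב", "מ", "פ"}
# Letters that carry a dagesh at the start of a word and lose it after a prefix.
BEGADKEFAT = {"ב", "ג", "ד", "כ", "פ", "ת"}

def with_vav(word):
    """Prefix the conjunction vav ("and") to a pointed Hebrew word (simplified)."""
    s = unicodedata.normalize("NFC", word)
    first, rest = s[0], s[1:]
    # Split off the points attached to the first consonant.
    i = 0
    while i < len(rest) and unicodedata.combining(rest[i]):
        i += 1
    marks, tail = rest[:i], rest[i:]
    if first in BEGADKEFAT:
        marks = marks.replace(DAGESH, "")
    # Shuruq (vav with a dot) before labials, vav with shewa otherwise.
    prefix = VAV + DAGESH if first in LABIALS else VAV + SHEWA
    return unicodedata.normalize("NFC", prefix + first + marks + tail)
```

Applied to בֵּין this gives וּבֵין, as in verse 4, and applied to אֵת it gives וְאֵת, as in verse 1.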

Verse 5:

וַיִּקְרָ֨א אֱלֹהִ֤ים ׀ לָאֹור֙ יֹ֔ום וְלַחֹ֖שֶׁךְ קָ֣רָא לָ֑יְלָה וַֽיְהִי־עֶ֥רֶב וַֽיְהִי־בֹ֖קֶר יֹ֥ום אֶחָֽד׃

“And God called the light Day, and the darkness he called Night. And the evening and the morning were the first day.” (KJV)

The first word, וַיִּקְרָא, is a wayyiqtol of קָרָא, “to call”. “And God called”, וַיִּקְרָא אֱלֹהִים. In this verse we see the preposition לְ, “to”, “toward”. It is used with both אוֹר, “light”, and חֹשֶׁךְ, “darkness”: לָאוֹר and לַחֹשֶׁךְ. And he called the light, לָאוֹר, day, יוֹם, and he called the darkness, לַחֹשֶׁךְ, night, לָיְלָה. Then we see the verb הָיָה, “to be” again as a wayyiqtol, וַיְהִי, “and it was”. And it was evening, עֶרֶב, and it was morning, בֹקֶר. We can use these two words along with טֹוב, “good”, for some common Hebrew greetings: ערב טוב, “good evening”, and בוקר טוב, “good morning”. And all of this was יֹום אֶחָד, “one day”. Here we see the Hebrew number one: אֶחָד.

Let’s do Genesis 1:1-5 in Hebrew again, all together. Listen for the words and forms that we’ve gone over and see how much you can understand now.

בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃

וְהָאָ֗רֶץ הָיְתָ֥ה תֹ֙הוּ֙ וָבֹ֔הוּ וְחֹ֖שֶׁךְ עַל־פְּנֵ֣י תְהֹ֑ום וְר֣וּחַ אֱלֹהִ֔ים מְרַחֶ֖פֶת עַל־פְּנֵ֥י הַמָּֽיִם׃

וַיֹּ֥אמֶר אֱלֹהִ֖ים יְהִ֣י אֹ֑ור וַֽיְהִי־אֹֽור׃

וַיַּ֧רְא אֱלֹהִ֛ים אֶת־הָאֹ֖ור כִּי־טֹ֑וב וַיַּבְדֵּ֣ל אֱלֹהִ֔ים בֵּ֥ין הָאֹ֖ור וּבֵ֥ין הַחֹֽשֶׁךְ׃

וַיִּקְרָ֨א אֱלֹהִ֤ים ׀ לָאֹור֙ יֹ֔ום וְלַחֹ֖שֶׁךְ קָ֣רָא לָ֑יְלָה וַֽיְהִי־עֶ֥רֶב וַֽיְהִי־בֹ֖קֶר יֹ֥ום אֶחָֽד׃

So to review. We’ve seen the perfect, imperfect, and wayyiqtol verb forms. We’ve seen construct nouns and some prepositions. We’ve learned vocabulary for and read the first five verses of the Bible. I know that this was a firehose of information. But hopefully it can serve as a launching point for further learning for folks who are interested. I plan to do some similar readings in Genesis, as well as other parts of the Bible, while highlighting certain aspects of the language.

References:

- Waltke, Bruce K., and M. O’Connor. An Introduction to Biblical Hebrew Syntax. Winona Lake, IN: Eisenbrauns, 1990.

- Cook, John A. Time and the Biblical Hebrew Verb: The Expression of Tense, Aspect, and Modality in Biblical Hebrew. Winona Lake, IN: Eisenbrauns, 2012.

- Fassberg, Steven E. “The Biblical Hebrew Verb.” In A Handbook of Biblical Hebrew, edited by W. Randall Garr and Steven E. Fassberg, 66–83. Winona Lake, IN: Eisenbrauns, 2016.

- Speiser, E. A. Genesis. Anchor Bible 1. Garden City, NY: Doubleday, 1964.

- Sarna, Nahum M. Genesis. JPS Torah Commentary. Philadelphia: Jewish Publication Society, 1989.

- Hendel, Ronald. “Genesis 1:1–2 as a Hebrew Temporal Clause.” Catholic Biblical Quarterly 63, no. 2 (2001): 193–209.

- Herczeg, Y. The Torah with Rashi’s Commentary Translated, Annotated, and Elucidated. Sapirstein Edition. 5 vols. 1999.

- Alter, Robert. The Hebrew Bible: A Translation with Commentary. New York: Norton, 2019.

- Heidel, Alexander. The Babylonian Genesis. 2nd ed. Chicago: University of Chicago Press, 1951.

- Koehler, Ludwig, Walter Baumgartner, and Johann Stamm. The Hebrew and Aramaic Lexicon of the Old Testament. Leiden: Brill, 1994–2000.